How to Adopt AI Securely in Your Business

Businesses adopt AI securely by first identifying where AI is already being used, then setting an employee AI policy, approving tools and use cases, protecting sensitive data, assigning governance ownership, and reviewing results over time. Secure AI adoption starts with visibility, rules, and approved use, not with buying more tools.

For executives, the issue is not whether AI will show up in the business. It already has. The real question is whether your organization can use it in a way that preserves control, supports good judgment, protects sensitive information, and creates clear accountability.

A secure business AI strategy is less about technical novelty and more about disciplined rollout. When leaders approach AI this way, they can support productivity without turning tool adoption into a governance problem.

Why AI adoption needs a security plan first

AI changes how information moves through your business. It can affect what employees share, how work is produced, which vendors touch business data, and how decisions get made. That makes security planning a starting point for adoption, not a late-stage cleanup step.

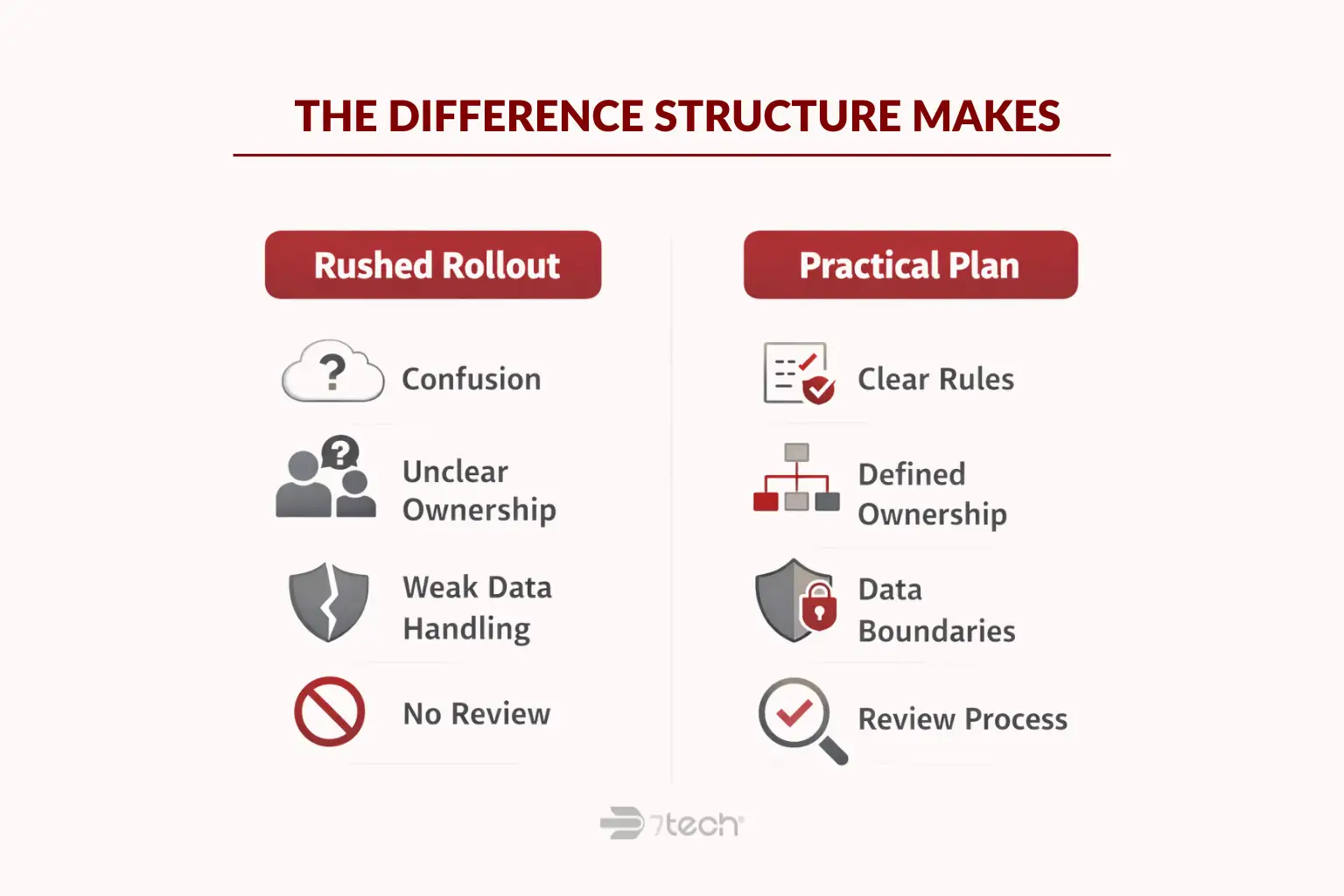

For executives, this is a business-readiness issue. AI can influence confidentiality, compliance posture, workflow consistency, and reputational exposure. A rushed rollout may deliver speed in the short term while creating confusion about ownership, data handling, and review.

A practical plan makes adoption easier to defend. It helps leadership explain which tools are allowed, what data is off-limits, who approves new use cases, and how outputs should be reviewed. That is far more sustainable than letting each department build its own rules by habit.

Government guidance is moving in the same direction. NIST’s generative AI risk management profile emphasizes governance, defined responsibilities, and ongoing risk management. The NCSC guidance for leaders on AI and cyber security also frames AI as a leadership and management issue, not just a technical one.

What shadow AI means for your business

Shadow AI means employees are using AI tools for work without formal approval, visibility, or guardrails. In plain language, it is AI use that has entered the business faster than policy and oversight.

That can happen in very ordinary ways. A team member pastes meeting notes into a public chatbot to summarize them. A manager uses an unapproved writing tool to draft client communications. A finance employee uses AI to rework internal data into a report without understanding where that data goes or how the output should be checked.

The issue is usually not bad intent. Most employees are trying to save time, improve writing, or move faster. The problem is that leadership may have no reliable view of which tools are in use, what information is being shared, or whether anyone has evaluated risk, permissions, privacy terms, or output quality.

That visibility gap is why shadow AI matters. If leaders cannot see current use, they cannot govern it.

The risks of adopting AI without visibility or rules

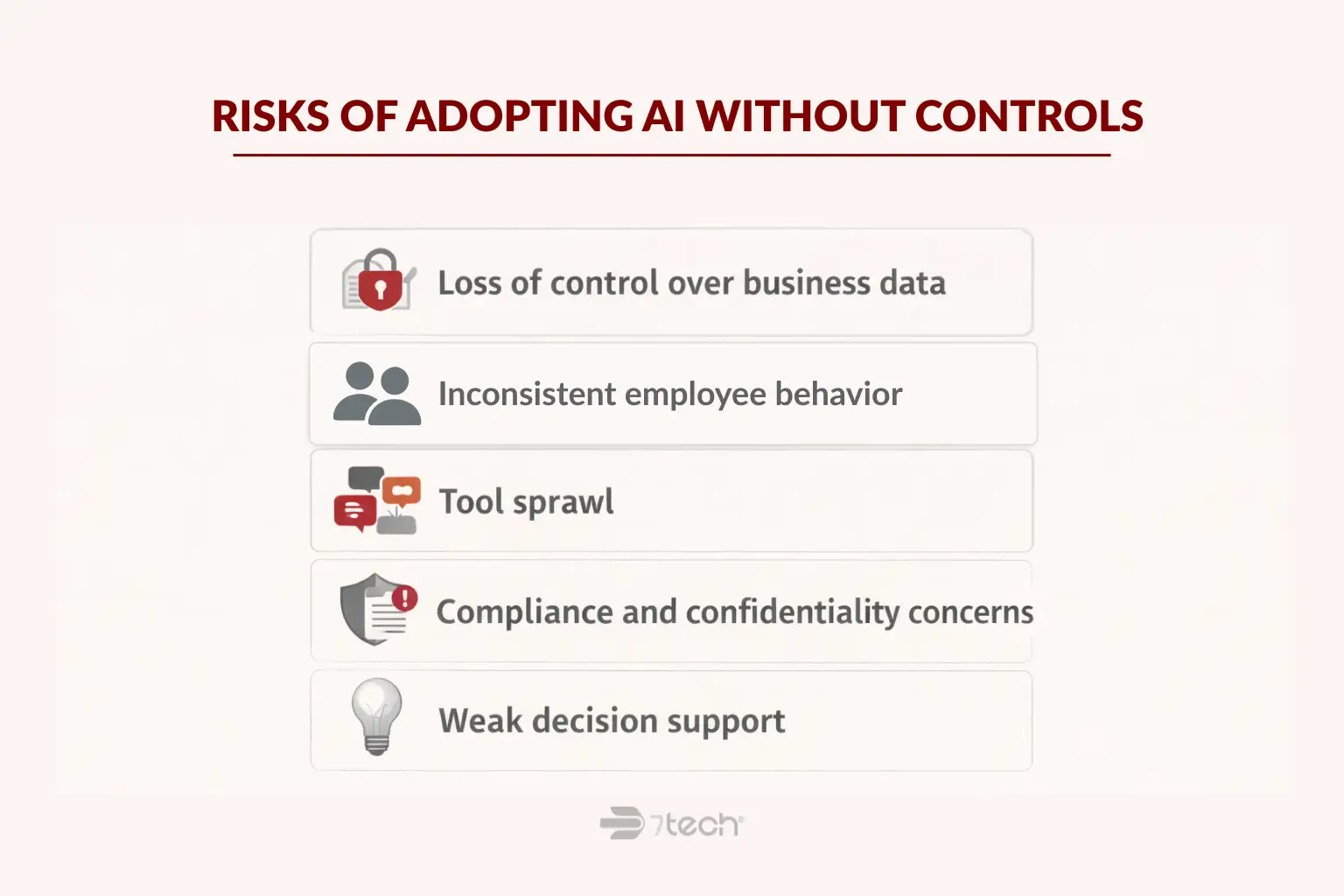

When AI adoption happens without structure, the risks tend to look familiar to executives. They show up as loss of control, inconsistent behavior, unclear ownership, and preventable exposure.

- Loss of control over business data: Employees may enter confidential, regulated, or client-related information into tools that were never approved for that purpose.

- Inconsistent employee behavior: One team uses AI responsibly, another uses it casually, and no one is working from the same rules.

- Tool sprawl: Different departments adopt different tools, subscriptions, and workflows with no central review.

- Compliance and confidentiality concerns: Data handling may drift away from internal standards or external obligations.

- Weak decision support: AI output may sound polished while still being incomplete, inaccurate, or unsuitable for business use without human review.

These are not theoretical concerns. They connect directly to executive accountability. If a sensitive document is shared in the wrong system, or a business decision relies on unreviewed AI output, the problem quickly becomes operational and reputational.

The CISA guidance on AI data security reinforces the importance of protecting the data that supports AI-related processes. The FTC has also warned organizations to uphold privacy and confidentiality commitments when using AI in business operations.

How to adopt AI securely in your business

The most practical approach is sequential. Start by understanding current use, then establish clear guardrails, approve business use cases, protect sensitive data, assign ownership, train employees, and review results over time.

Start with visibility before rollout

Before expanding access, find out where AI is already being used. Ask department leaders what tools employees are relying on, what they use them for, and what data is involved. Look for unmanaged use before approving new subscriptions or launching a formal business AI rollout.

This step helps leadership distinguish between low-risk experimentation and higher-risk behavior. It also makes the next policy decisions more realistic, because they are grounded in actual workflows instead of assumptions.

The NCSC’s executive guidance supports this visibility-first approach. If you do not know where AI is present, you cannot set practical controls around it.
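One way to make that inventory concrete is to record what department leaders report and group it by tool. The sketch below is illustrative only: the department names, tool names, and the `APPROVED` set are hypothetical placeholders, and a real inventory would likely draw on more sources than a survey.

```python
from collections import defaultdict

# Hypothetical approved-tool set; replace with your organization's own list.
APPROVED = {"example-assistant"}

# Hypothetical survey answers: (department, tool, kind of data involved).
responses = [
    ("Finance", "public-chatbot", "internal financial data"),
    ("Sales", "example-assistant", "meeting notes"),
    ("Operations", "public-chatbot", "meeting notes"),
]


def unapproved_usage(rows, approved):
    """Group departments and data types by each tool not on the approved list."""
    found = defaultdict(lambda: {"departments": set(), "data": set()})
    for department, tool, data in rows:
        if tool not in approved:
            found[tool]["departments"].add(department)
            found[tool]["data"].add(data)
    return dict(found)


report = unapproved_usage(responses, APPROVED)
for tool, details in report.items():
    print(tool, sorted(details["departments"]), sorted(details["data"]))
```

Even a list this small shows leadership which unmanaged tools are touching which kinds of data, which is the input the later policy decisions need.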

Set a simple AI policy employees can follow

An AI policy for employees should be clear enough to guide behavior in everyday work. It should define approved uses, prohibited uses, review expectations, and what information should never be entered into an AI tool.

Keep it plain-language. If employees need a lawyer and a decoder ring to understand it, they will not use it.

A usable employee AI policy often includes:

- Which tools are approved for work

- Which uses are acceptable, such as summarization, drafting, or brainstorming

- Which uses require review or approval

- What data is prohibited from entry

- When human review is mandatory before output is used externally or operationally

This is where privacy and confidentiality commitments matter. The FTC’s business-facing guidance is a useful reminder that organizations should align their AI use with what they promise customers, employees, and partners.

Approve tools and use cases

Secure AI adoption does not require saying yes to everything. It requires controlled standardization. An approved-tool list gives employees a clear lane and reduces the temptation to choose whatever public tool appears fastest in the moment.

It also helps to separate low-risk use cases from higher-risk ones. For example, drafting an internal outline is different from analyzing sensitive contract language, processing regulated information, or generating work that affects legal, financial, or compliance outcomes.

Executives do not need to approve every prompt. They do need a process that defines which tools are allowed, which use cases are acceptable, and when additional review is required.
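For teams that want to encode this process, the approval logic can be written down as a small lookup. This is a minimal sketch under stated assumptions: the tool name, the use-case labels, and the three outcomes are hypothetical, and each organization would define its own tiers.

```python
from enum import Enum


class Decision(Enum):
    ALLOWED = "allowed"
    NEEDS_REVIEW = "needs_review"
    BLOCKED = "blocked"


# Hypothetical register: tool name -> use cases approved without extra review.
APPROVED_TOOLS = {
    "example-assistant": {"summarization", "drafting", "brainstorming"},
}

# Hypothetical higher-risk use cases that always require additional review.
HIGH_RISK_USE_CASES = {"contract_analysis", "regulated_data", "financial_reporting"}


def check_request(tool: str, use_case: str) -> Decision:
    """Return the policy outcome for using a given tool for a given use case."""
    if tool not in APPROVED_TOOLS:
        return Decision.BLOCKED  # Unapproved tools are never allowed.
    if use_case in HIGH_RISK_USE_CASES:
        return Decision.NEEDS_REVIEW  # High-risk work is escalated, even on approved tools.
    if use_case in APPROVED_TOOLS[tool]:
        return Decision.ALLOWED
    return Decision.NEEDS_REVIEW  # Unknown use cases default to review, not approval.


print(check_request("example-assistant", "drafting").value)
print(check_request("example-assistant", "contract_analysis").value)
print(check_request("public-chatbot", "drafting").value)
```

The design choice worth noting is the default: anything not explicitly listed falls to review rather than approval, which mirrors the idea that leaders define the lanes instead of approving every prompt.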

Protect sensitive data and access

Data rules should be explicit. Employees should know what must never be entered into AI tools, including confidential client information, regulated data, internal financial details, credentials, protected health information, legal strategy, or other sensitive business content unless a specific approved environment and process exists.

Access should also follow role and business need. Not every employee needs the same AI permissions, and not every team should be allowed to use the same tools in the same way.

In practical terms, leaders should review:

- What data types are restricted

- Which users can access approved AI tools

- How identity, authentication, and permissions are managed

- Whether vendor privacy and data handling terms have been reviewed

- How AI-generated output is reviewed before use

This is where CISA guidance and FTC privacy expectations become especially relevant. Secure AI tools for business should fit your data rules, not force you to relax them.
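Explicit data rules can also be backed by a simple automated screen that runs before text is sent to an AI tool. The example below is a rough sketch, not a complete data loss prevention control: the pattern names and regular expressions are illustrative assumptions, and pattern matching will miss most sensitive content that does not follow a recognizable format.

```python
import re

# Hypothetical patterns for a few restricted data types; real rules would be
# defined by the organization's own data classification.
RESTRICTED_PATTERNS = {
    "credential_keyword": re.compile(r"\b(password|api[_ ]?key|secret)\b", re.IGNORECASE),
    "us_ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_restricted(text: str) -> list[str]:
    """Return the names of restricted patterns found in the text, sorted."""
    return sorted(
        name for name, pattern in RESTRICTED_PATTERNS.items() if pattern.search(text)
    )


def safe_to_submit(text: str) -> bool:
    """A prompt passes the screen only if no restricted pattern matches."""
    return not find_restricted(text)


print(find_restricted("Summarize these meeting notes about Q3 planning."))
print(find_restricted("The password is hunter2 and the SSN is 123-45-6789."))
```

A screen like this works best as a backstop behind policy and training, since it only catches what it has been told to look for.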

Assign ownership and governance

AI governance for business should not sit with IT alone. Security, compliance, operations, HR, legal, and executive leadership may all have a role depending on the organization.

At minimum, someone should own:

- Policy updates

- Tool approvals

- Risk review

- Data handling rules

- Training expectations

- Periodic reporting to leadership

This is where many businesses lose momentum. They treat AI implementation for business as a software decision, when it is really a business operating decision with technology implications.

NIST and NCSC both support the idea that defined roles and oversight are essential. Governance is what keeps early experimentation from turning into unmanaged business dependency.

Train employees on safe use

Training should focus on behavior, not hype. Employees need to understand when AI is helpful, when it is not, what should never be shared, and why human judgment still matters.

Good training is practical. It shows examples of acceptable use, prohibited use, and situations where AI output must be reviewed carefully before it affects a customer, a financial decision, a policy statement, or a regulated process.

The goal is not to make everyone an AI specialist. It is to make safe use normal and repeatable.

Review results and adjust controls

Responsible AI adoption is not a one-time setup. Usage expands. New tools appear. Departments find new use cases. That means governance needs periodic review.

Leadership should check what is working, where use is expanding, what new risks are showing up, and whether policy guidance still matches real behavior. Approved tools, training, access, and review standards may all need adjustment over time.

Mature AI risk management treats governance as ongoing work rather than a one-time project. That is how businesses keep AI useful without letting it become another blind spot.

What a practical AI governance framework looks like

An AI governance framework does not need to be bureaucratic to be effective. For most businesses, it can be a usable operating model with six parts:

- Visibility: Know where AI is being used and by whom.

- Policy: Give employees clear rules they can follow.

- Approved tools: Standardize what is allowed.

- Data rules: Define what can and cannot be shared.

- Ownership: Assign responsibility for approvals, review, and updates.

- Training and review: Reinforce safe use and improve controls over time.

That is what a practical framework looks like in real business terms. It creates guardrails without forcing the organization into unnecessary complexity.

NIST’s AI risk management guidance is useful here because it frames governance as an ongoing discipline. That aligns with how executives already manage risk in other areas of the business: clear standards, defined ownership, periodic review, and accountable decision-making.

Common mistakes businesses make when adopting AI

- Starting with tools before policy: Fast adoption without rules usually creates rework later.

- Letting unapproved use spread quietly: Shadow AI often becomes normal before leadership notices it.

- Writing vague employee guidance: If the rules are abstract, employees will improvise.

- Treating AI as only an IT decision: Governance, data handling, and workflow ownership reach beyond technology teams.

- Skipping training and review: Policy alone does not shape day-to-day behavior.

- Over-focusing on hype: Executive value comes from control, clarity, and useful implementation, not novelty.

These mistakes are common because AI enters businesses quickly and informally. The fix is usually not bigger theory. It is better structure.

Frequently asked questions

What does secure AI adoption mean for a business?

It means using AI with clear rules, approved tools, data protections, defined ownership, and review processes so the business can gain value without losing control.

What is shadow AI?

Shadow AI is employee use of AI tools for work without formal approval, visibility, or governance. It often develops quietly through everyday productivity tasks.

Why does AI need an employee policy?

An employee policy turns leadership expectations into practical guidance. It helps staff understand approved use, prohibited use, and what information should never be shared.

Should every employee be allowed to use AI tools?

No. Access should depend on role, business need, and the sensitivity of the work involved. Different teams may need different tools, permissions, and review standards.

What should businesses avoid sharing with AI tools?

Businesses should avoid entering confidential, regulated, client-sensitive, credential-related, financial, legal, or otherwise restricted information unless a specifically approved process and environment exists.

Who should own AI governance in a business?

AI governance should be cross-functional. IT may support implementation, but leadership, security, compliance, operations, HR, and legal often need shared ownership.

Your First Step Toward Secure AI Adoption

The first step is not choosing more AI tools. It is getting clear on where AI is already being used, what risks exist today, and what guardrails your business needs before adoption expands.

That kind of clarity helps executives make defensible decisions. It also makes AI rollout more practical for employees, more manageable for IT, and more aligned with security, compliance, and operational continuity goals.

7tech approaches this work with the same executive-first posture it brings to managed IT, cybersecurity, compliance support, business continuity, and strategic IT leadership: calm guidance, clear structure, and no pressure to overcomplicate the answer.

“Their team provided clear guidance, breaking down the complex steps and helping us stay on track without unnecessary stress or confusion.”

Donald Henderson, IT Support Specialist, Southwest Electrical Contracting Services

If your organization wants a clear starting point for secure AI adoption, governance, and ongoing management, the next step should feel straightforward.

Book a Secure AI Adoption Discovery Call with 7tech

Get a clear first step for secure AI rollout, governance, and management.

Neal Juern, CEO of 7tech, helps business leaders take control of their IT and strengthen cybersecurity without the complexity. Known for his straight-talk, business-first approach, Neal has guided hundreds of executives toward smarter, safer operations through Managed IT Services and Managed Security Services that make sense to people outside the IT department.